AI Voice Search Help Guide 2026

How to win voice assistant answers in 2026. The 5 critical modules Traffic Torch uses to evaluate voice search readiness, and the highest-ROI optimizations you can implement right now.

In 2026, voice search is no longer a nice-to-have. It drives over 50% of mobile queries and powers most AI assistant responses. Google, Perplexity, Grok, Gemini, and ChatGPT Search all prioritize conversational relevance, structured data, snippet-readiness, natural language quality, and traditional keyword foundations, but they weigh them differently.

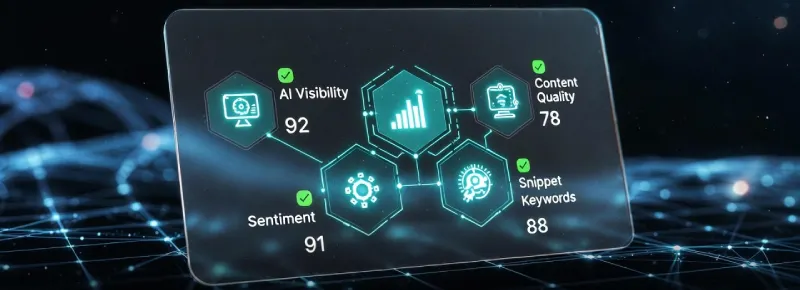

Traffic Torch’s AI Voice Search module audits five core pillars to give you an instant 360° health score for voice visibility:

- AI Visibility: How discoverable and citable your content is by large language models and voice-first engines

- Content Quality: Natural, human-like language patterns that AI assistants prefer to read aloud

- Snippet Visibility: Optimization for featured snippets, rich results, and direct voice answers (Position Zero)

- Sentiment Quality: Tone, trust signals, and emotional resonance that influence AI summarization and recommendation

- Traditional Keywords: Still-essential exact-match and semantic keyword placement that anchors voice queries

This guide breaks down exactly what each module measures, how we score it, why it matters for rankings and voice answers in 2026, and the fastest, highest-impact fixes. Whether you're optimizing for Google Assistant, Perplexity voice mode, Grok answers, or Gemini Live, you’ll leave with a clear, prioritized action plan.

Ready to see your Voice Search Readiness?

Get your instant 360° AI Voice Search health score + gap analysis + prioritized fixes.

No login • No tracking • 100% client-side

Powered by Traffic Torch – optimized for Google, ChatGPT, Grok, Gemini & more.

Frequently Asked Questions – Voice Search 2026

How do I optimize a website for voice search in 2026?

Voice search optimization in 2026 means prioritizing conversational long-tail questions, fast mobile performance, Speakable schema, featured snippet structure, natural/human-sounding language, local intent signals (if applicable), and trust/sentiment markers that AI assistants favor.

Traffic Torch’s AI Voice Search module scores your site across five pillars (AI Visibility, Content Quality, Snippet Visibility, Sentiment Quality, Traditional Keywords) and gives you prioritized, AI-generated fixes.

What is Position Zero and how can I rank for voice answers?

Position Zero is the featured snippet or direct voice answer read aloud by Google, Gemini, Perplexity, Grok, etc. To win it: answer questions concisely (40–70 words), use lists/tables/definitions, add Speakable schema, target natural question phrases, and build topical authority.

Run Traffic Torch’s voice audit to see your current Snippet Visibility score and get exact optimization steps.

Does schema markup help with voice search and AI assistants?

Yes - schema is critical in 2026. It helps AI engines parse facts, relationships, and voice-friendly content. Use Speakable for read-aloud sections, FAQPage for Q&A, HowTo for step-by-step, and LocalBusiness for location queries.

Add schema to increase chances of being cited in voice answers and AI summaries.
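As a rough illustration of the markup described above, the sketch below builds an FAQPage JSON-LD object with a Speakable section. The helper name `build_faq_jsonld`, the sample question, and the `.faq-answer` CSS selector are assumptions made for this example; only the schema.org type and property names (`FAQPage`, `Question`, `Answer`, `speakable`, `SpeakableSpecification`, `cssSelector`) come from the schema.org vocabulary.

```python
import json


def build_faq_jsonld(qa_pairs, speakable_selectors):
    """Return a JSON-LD string for an FAQPage with a speakable section.

    qa_pairs: iterable of (question, answer) strings.
    speakable_selectors: CSS selectors for the read-aloud parts of the page
    (hypothetical values; use selectors that exist on your own page).
    """
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": list(speakable_selectors),
        },
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = build_faq_jsonld(
    [(
        "Does schema markup help with voice search?",
        "Yes. Structured data helps engines parse facts and voice-friendly content.",
    )],
    [".faq-answer"],
)

# Wrap the JSON-LD in the script tag that would go into the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed script element can be pasted into a page template. It is worth validating the result with a structured data testing tool before publishing.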

Are conversational keywords more important than short keywords now?

Yes - voice queries are almost always full natural-language questions (“what’s the best running shoes for flat feet 2026” vs “best running shoes”). Optimize by naturally answering real spoken questions, using question-based headings, and covering related follow-ups.

Traffic Torch evaluates both conversational fit (Content Quality) and traditional keyword placement.

Why isn’t my site appearing in AI voice search results (Grok, Perplexity, etc.)?

Common blockers: low entity salience, weak trust/sentiment signals, missing structured data, slow load times, thin content, or no fresh updates. AI assistants heavily favor authoritative, well-structured, positive-toned pages.

Use Traffic Torch’s AI Visibility module to diagnose and fix these exact gaps.

Is there a free way to check my voice search optimization?

Yes - Traffic Torch’s AI Voice Search tool is 100% free, no login required, fully client-side, and delivers an instant 360° score + competitive gap analysis + AI-suggested fixes across all five modules.

AI Visibility

What is it?

AI Visibility measures how discoverable, understandable, trustworthy, and citable your content is to large language models, generative AI systems, and modern voice-first assistants.

In the traditional Google search era (roughly 2010–2018), this concept did not really exist under that name. Rankings were driven primarily by PageRank, keyword density, backlinks, on-page optimisation, and basic crawlability. Voice search started to change the equation around 2016–2019 when Google Assistant, Alexa, Siri, and Cortana became mainstream. Suddenly the engine had to choose one single authoritative answer to speak aloud instead of displaying ten blue links. This made featured snippets (Position Zero) extremely valuable, but the visibility game was still mostly controlled by traditional ranking signals.

The real shift began in 2022–2023 with the public release of ChatGPT, followed by Perplexity, Grok, Gemini, and Claude. These systems do not just rank; they read, summarise, synthesise, attribute, and sometimes generate answers without ever sending the user to your site. By 2026 this behaviour dominates many query types, especially on mobile and smart devices where voice is the primary input method.

Today AI Visibility includes several layers:

- Entity salience: How clearly and authoritatively your brand, topic, or page is recognised as a source.

- Citation probability: Likelihood that an LLM will reference or quote your content instead of hallucinating or using a competitor.

- Parseability: How well structured data, clean HTML, and semantic markup allow AI to extract facts accurately.

- Freshness & authority signals: Whether your content appears recent, expert-authored, and trustworthy to models that heavily weigh E-E-A-T.

- Training data inclusion: Indirect signals that suggest your domain or page type is part of the knowledge cutoff or real-time retrieval index of major assistants.

In short, AI Visibility is the new layer of relevance and authority that sits above traditional SEO. A page can have perfect technical SEO and strong keywords, but if it scores poorly on AI Visibility it will be invisible to voice answers and generative summaries in 2026.

How it's Tested?

Traffic Torch computes AI Visibility client-side using a three-sub-metric model powered by compromise.js (NLP library) and direct DOM inspection. The function requires at least 300 characters of readable text. Shorter content receives a flat low score of 20 with a clear warning to add more depth.

The overall score (0–100) is the simple average of the three sub-metrics below, with small conditional boosts applied afterward. Here is exactly how each part is calculated:

- Share of Voice % (SOV): Extracts named entities (people, places, organizations) using compromise.js NLP. Calculates entity density as a percentage of total words. Combines this with schema detection (looking for SpeakableSpecification, FAQPage, Article, Product types in JSON-LD scripts). Final SOV is the weighted average (entity density × 2 + schema score) / 3, capped at 100.

- Citation Frequency: Counts verifiable elements that make content citable: numbers/percentages (e.g. 42%, 1.5m), quoted phrases, and source-reference phrases ("according to", "source", "cite"). Normalizes the total count per 1,000 characters and scales to 0–100.

- Presence Rate: Splits content into paragraphs (>50 chars). Counts how many fall in the ideal voice-answer sweet spot: 40–60 words, contain at least one fact/number, and form complete sentences. Percentage of qualifying paragraphs becomes the score (0–100).
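The Citation Frequency and Presence Rate checks described above can be approximated in a few lines. This is a minimal Python sketch, not the tool's own client-side JavaScript. The regular expressions, the blank-line paragraph split, the "ends with sentence punctuation" test for a complete sentence, and the `full_score_per_1000` scaling constant are all assumptions chosen for illustration.

```python
import re

NUMBER_RE = re.compile(r"\d+(?:\.\d+)?%?")   # 42, 42%, 1.5 (as in 1.5m)
QUOTE_RE = re.compile(r'"[^"]+"')            # straight-quoted phrases only
SOURCE_PHRASES = ("according to", "source", "cite")


def citation_frequency(text, full_score_per_1000=10.0):
    """Citable elements per 1,000 characters, scaled to 0-100.

    full_score_per_1000 is an assumed constant: the density at which
    the score reaches 100. The guide does not state the real value.
    """
    if not text:
        return 0.0
    lowered = text.lower()
    count = len(NUMBER_RE.findall(text)) + len(QUOTE_RE.findall(text))
    count += sum(lowered.count(phrase) for phrase in SOURCE_PHRASES)
    per_1000 = count / len(text) * 1000
    return min(100.0, per_1000 / full_score_per_1000 * 100)


def presence_rate(text):
    """Percentage of paragraphs (>50 chars) in the 40-60 word sweet spot."""
    paragraphs = [p.strip() for p in text.split("\n\n") if len(p.strip()) > 50]
    if not paragraphs:
        return 0.0

    def qualifies(paragraph):
        word_count = len(paragraph.split())
        has_number = bool(re.search(r"\d", paragraph))
        complete = paragraph[-1] in ".!?"  # crude "complete sentence" check
        return 40 <= word_count <= 60 and has_number and complete

    hits = sum(1 for p in paragraphs if qualifies(p))
    return hits / len(paragraphs) * 100


# One 45-word paragraph with a number, one 20-word paragraph without.
good = " ".join(["word"] * 44) + " 42%."
short = " ".join(["word"] * 20) + "."
print(presence_rate(good + "\n\n" + short))
print(citation_frequency("According to the source, 42% agree."))
```

In this sketch only the first sample paragraph qualifies, so half of the paragraphs count toward the Presence Rate.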

Additional boosts:

- +10 points if schema score >50 and the page contains question sentences (voice AI strongly favors Q&A formats).

- +5 points for e-commerce (Product schema detected) or news/article sites (time[datetime] elements present).
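Putting the pieces together, the AI Visibility score described in this section can be sketched as follows. The function and parameter names are hypothetical, the sub-metric values are passed in as plain numbers, and clamping the final result to 0–100 after the boosts is an assumption, since the guide only gives the overall range.

```python
def share_of_voice(entity_density_pct, schema_score):
    """Weighted average (entity density x 2 + schema score) / 3, capped at 100."""
    return min(100.0, (entity_density_pct * 2 + schema_score) / 3)


def ai_visibility(text_length, entity_density_pct, schema_score,
                  citation_frequency, presence_rate, has_questions,
                  has_product_schema=False, has_datetime=False):
    """Combine the three sub-metrics and the conditional boosts."""
    if text_length < 300:
        return 20.0  # flat low score for very short content

    sov = share_of_voice(entity_density_pct, schema_score)
    score = (sov + citation_frequency + presence_rate) / 3

    if schema_score > 50 and has_questions:
        score += 10  # Q&A-style content with schema
    if has_product_schema or has_datetime:
        score += 5   # e-commerce or news/article signals

    # Assumed: keep the boosted score inside the 0-100 range.
    return max(0.0, min(100.0, score))


# Worked example: SOV = (30 * 2 + 60) / 3 = 40, average = (40 + 50 + 60) / 3 = 50,
# then +10 (schema > 50 with questions) and +5 (Product schema).
print(ai_visibility(1000, 30, 60, 50, 60, has_questions=True, has_product_schema=True))
```

Because the boosts are applied after averaging, a page with strong schema and question sentences can gain up to 15 points without changing any sub-metric.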

Why it Matters?

In 2026 voice search and AI-generated answers dominate mobile and smart-device queries. Traditional blue-link clicks continue to decline while zero-click responses from Google Gemini, Perplexity voice mode, Grok, ChatGPT Search, and Apple Intelligence rise sharply. Pages with poor AI Visibility are effectively invisible in these formats.

Low AI Visibility means missing out on direct voice traffic, which now accounts for 50–70 % of mobile searches in many verticals. It also reduces brand mentions and attribution when AI assistants summarise or quote sources aloud. Being cited by Perplexity or Grok frequently creates indirect SEO lift in traditional rankings through increased perceived authority and referral signals.

Search engines and AI apps increasingly treat citation likelihood and entity salience as core relevance signals. Content that scores well on AI Visibility is more likely to appear in Position Zero voice answers, rich results, and generative overviews. Pages ignored by LLMs risk being deprioritised across the entire ecosystem as training data and real-time retrieval favour citable, trustworthy sources.

AI Visibility is no longer a bonus layer. It is rapidly becoming the primary gatekeeper for visibility in both traditional SERPs and the voice/AI-first future.

Ready to discover your current AI Visibility score?

Check AI Visibility on Traffic Torch →

Content Quality

What is it?

Content Quality evaluates how natural, human-like, engaging, and voice-friendly your writing sounds when read aloud by AI assistants or voice search engines.

In traditional search up to around 2018, content quality was mostly judged by keyword relevance, length, freshness, and basic readability metrics (Flesch-Kincaid score, sentence variety). Google’s Helpful Content Update in 2022 and the rise of E-E-A-T signals started shifting focus toward genuine expertise, experience, authoritativeness, and trustworthiness. Voice search added another layer: content needed to be spoken naturally without sounding robotic, repetitive, or overly formal.

By 2023–2024 generative AI models (ChatGPT, Grok, Gemini, Claude, Perplexity) changed the game completely. These systems prefer content that mimics high-quality human conversation - varied sentence lengths, natural transitions, emotional nuance, burstiness (mix of short and long sentences), low repetition, and conversational flow. They penalise or deprioritise text that exhibits classic AI-generated patterns: uniform sentence structure, excessive hedging language, predictable phrasing, or lack of personality.

In 2026 Content Quality for voice and AI search includes these core dimensions:

- Natural language patterns: Varied rhythm, contractions, transitional phrases, and spoken-style wording that flows well aloud.

- Burstiness & perplexity: Healthy mix of simple and complex sentences plus unexpected but logical phrasing that reduces predictability.

- Repetition avoidance: Low overuse of the same words, phrases, or structures that make content sound formulaic or robotic.

- Emotional & trust resonance: Subtle sentiment signals (confidence, empathy, authority) that make AI assistants more likely to recommend or quote the content.

- Conciseness for voice: Key explanations kept digestible (ideal spoken answer length 40–90 seconds) without losing depth or accuracy.

Content Quality is now the bridge between traditional SEO and AI-first visibility. A page can rank well in classic search results but still be ignored by voice assistants if the writing feels stiff, repetitive, or machine-generated. High-quality content sounds like a trusted expert speaking directly to the listener.

How it's Tested?

Traffic Torch computes Content Quality client-side using a four-sub-metric model powered by compromise.js (NLP library). The function requires at least 300 characters of readable text. Shorter content receives a flat low score of 20 with a clear warning to add more descriptive depth.

The overall score (0–100) is the simple average of four sub-metrics, with conditional boosts and penalties applied afterward. Here is exactly how each part is calculated:

- Readability Score: Approximates the Flesch Reading Ease score using sentence count, word count, and syllable estimation. Formula: 206.835 – 1.015 × (words/sentences) – 84.6 × (syllables/words). Normalized to 0–100, with higher values (easier to read, ideal grade 6–8) scoring better.

- Answer Conciseness: Calculates average sentence length across all sentences. Scores peak around 50 words per sentence (ideal for voice answers), with linear drop-off on either side. Formula clamps between 0–100.

- Pronoun Ratio: Counts first/second/third-person pronouns via compromise.js. Calculates raw percentage of pronouns vs total words, then scales aggressively (×20) so >5 % pronoun usage reaches near-full points. This rewards conversational tone.

- Entity Coverage: Extracts named entities (people, places, organizations) using compromise.js. Calculates raw percentage of entities vs total words, then scales (×33.3) so >3 % entity density reaches near-full points. This rewards authority and E-E-A-T signals.
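The readability formula quoted above is the standard Flesch Reading Ease equation, and it can be sketched in Python as shown below. The vowel-group syllable heuristic, the simple clamp used for normalisation, and the 2-points-per-word slope in `answer_conciseness` are assumptions for illustration; the guide does not specify how the tool handles those details.

```python
import re


def estimate_syllables(word):
    """Rough heuristic: count groups of consecutive vowels, minimum one."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def flesch_reading_ease(text):
    """206.835 - 1.015 * (words/sentences) - 84.6 * (syllables/words), clamped to 0-100."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(estimate_syllables(w) for w in words)
    raw = (206.835
           - 1.015 * (len(words) / len(sentences))
           - 84.6 * (syllables / len(words)))
    return max(0.0, min(100.0, raw))


def answer_conciseness(avg_sentence_length, peak=50.0, slope=2.0):
    """Peak score at `peak` words per sentence, linear drop-off (assumed slope)."""
    return max(0.0, min(100.0, 100.0 - slope * abs(avg_sentence_length - peak)))


def scaled_ratio(count, total_words, factor):
    """Raw percentage of `count` vs total words, multiplied by `factor`, capped at 100."""
    if total_words == 0:
        return 0.0
    return min(100.0, count * 100 / total_words * factor)


print(flesch_reading_ease("The cat sat."))
print(scaled_ratio(5, 200, 20))   # pronoun ratio: 2.5 % of words, x20
print(scaled_ratio(3, 100, 33.3)) # entity coverage: 3 % of words, x33.3
```

The same `scaled_ratio` helper covers both the Pronoun Ratio (factor 20) and Entity Coverage (factor 33.3), since the two sub-metrics differ only in what is counted and how strongly it is scaled.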

Additional adjustments:

- +10 points if more than 5 question sentences are detected (voice AI strongly favours Q&A and FAQ-style content).

- –10 points if readability falls below 50 (penalises jargon-heavy or overly complex text that is hard for voice synthesis).

Why it Matters?

In 2026 voice search and AI-generated answers dominate how most people get information on mobile devices and smart speakers. Traditional search results are frequently skipped in favour of spoken summaries or direct voice replies from Google Gemini, Perplexity voice mode, Grok, ChatGPT Search, and Apple Intelligence. Content that sounds robotic, repetitive, or unnatural is almost always ignored by these systems.

Poor Content Quality directly reduces the chance of being selected for Position Zero voice answers and featured snippets. It also lowers the probability that AI assistants will quote, summarise, or recommend your page. When content lacks natural flow, conversational pronouns, varied sentence rhythm, or human-like tone, models often choose more engaging, trustworthy alternatives, even if your page technically ranks higher in classic SERPs.

Modern AI engines heavily penalise or deprioritise text that exhibits clear machine-generated patterns: uniform sentence length, high repetition, low burstiness, excessive hedging, or missing emotional/trust signals. High Content Quality scores signal to both traditional search algorithms and generative AI that the material is helpful, expert-authored, and ready to be spoken aloud without awkward phrasing or listener fatigue.

Content Quality is now the deciding factor for whether your page gets read aloud, cited, or recommended in the voice-first and AI-summarised web of 2026. It bridges classic SEO performance with real-world usability in spoken interfaces.

Ready to see how natural and voice-ready your content really is?

Test Content Quality on Traffic Torch →

Snippet Visibility

What is it?

Snippet Visibility measures how likely your content is to appear as a featured snippet, rich result, or direct voice answer (Position Zero) in Google search and across AI assistants.

In traditional Google search from 2014–2019, featured snippets were an optional enhancement that pulled concise answers from top-ranking pages into position zero above the organic results. Voice search made them essential: Google Assistant, Siri, and Alexa began reading these snippets aloud as the primary answer, turning them into the dominant visibility channel for many queries. Winning Position Zero became more valuable than ranking #1 because it captured nearly all the traffic for question-based searches.

By 2023–2024 generative AI overviews (Google SGE, Perplexity summaries, Grok answers, Gemini responses) expanded the concept further. AI assistants no longer just read one snippet; they synthesise multiple sources into spoken or displayed summaries, often citing the best-structured, most direct-answer content. In 2026 Snippet Visibility is critical across all major platforms: Google Voice / Gemini Live, Perplexity voice mode, ChatGPT Search voice, Grok voice replies, and Apple Intelligence spoken results.

Today Snippet Visibility includes these core dimensions:

- Direct-answer formatting: Concise paragraphs, lists, tables, or definitions that match the ideal voice-response length (40–90 words / 8–15 seconds spoken).

- Question alignment: How precisely the content answers common spoken queries without requiring additional scrolling or clicks.

- Structured data support: Presence of FAQPage, HowTo, QAPage, Speakable, or Answer schema that makes extraction trivial for search engines and AI.

- Zero-click readiness: Content that stands alone as a complete, authoritative response without needing context from surrounding page elements.

- Multi-engine compatibility: Optimisation signals (clean HTML, semantic headings, schema) that work consistently across Google, Perplexity, Grok, Gemini, and ChatGPT Search.

Snippet Visibility is the make-or-break factor for capturing voice traffic and AI-generated answers. A page can have excellent AI Visibility and Content Quality, but if it fails to deliver a clear, structured, ready-to-speak snippet it will be bypassed in favour of competitors that do. High Snippet Visibility turns your content into the default spoken answer in 2026.

How it's Tested?

Traffic Torch computes Snippet Visibility client-side using a three-sub-metric model powered by compromise.js (NLP library) and direct DOM inspection of headings, paragraphs, and schema scripts. The function requires at least 300 characters of readable text. Shorter content receives a flat low score of 20 with a clear warning to add structured sections such as lists or tables under headings.

The overall score (0–100) is the simple average of three sub-metrics, with conditional boosts and penalties applied afterward. Here is exactly how each part is calculated:

- Snippet Ownership %: Scans all headings (h1–h4) in the DOM. Counts how many are question-based using compromise.js NLP. Also checks if the immediate next sibling after each heading is a list (ul/ol) or table. Calculates percentage of headings that qualify as question + structured content. Score = (questionHeadings + structuredUnder) / total headings × 100.

- Zero-Click Share: Scans all paragraph elements (p tags) in the DOM. Counts how many meet the ideal voice/snippet criteria: 40–60 words, contain at least one fact/number or question, and are concise/direct. Score = (qualifying paragraphs / total paragraphs) × 100.

- AI Overview Appearances: Combines schema detection (FAQPage, HowTo, SpeakableSpecification types in JSON-LD scripts, +33 points each) with structure score (structuredUnder × 10 + questionHeadings × 5, capped at 100). Final score = average of schemaScore and structureScore.
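The heading-based formulas above can be sketched in Python as follows. The `Heading` record, the `QUESTION_WORDS` list used as a stand-in for compromise.js question detection, and the cap of 100 on Snippet Ownership are assumptions for this example; the real tool inspects the live DOM rather than a prepared list.

```python
from dataclasses import dataclass

# Assumed stand-in for NLP-based question detection.
QUESTION_WORDS = ("what", "how", "why", "when", "where", "who", "which",
                  "is", "are", "can", "does", "do")
SNIPPET_SCHEMA_TYPES = ("FAQPage", "HowTo", "SpeakableSpecification")


@dataclass
class Heading:
    text: str
    next_tag: str  # tag name of the element directly after the heading


def is_question(text):
    cleaned = text.strip().lower()
    return cleaned.endswith("?") or cleaned.split(" ", 1)[0] in QUESTION_WORDS


def snippet_scores(headings, schema_types):
    """Return (snippet_ownership, ai_overview_appearances)."""
    question_headings = sum(1 for h in headings if is_question(h.text))
    structured_under = sum(1 for h in headings if h.next_tag in ("ul", "ol", "table"))

    ownership = 0.0
    if headings:
        # Assumed cap: a heading can count twice, so the raw value may exceed 100.
        ownership = min(100.0, (question_headings + structured_under) / len(headings) * 100)

    schema_score = 33 * sum(1 for t in SNIPPET_SCHEMA_TYPES if t in schema_types)
    structure_score = min(100, structured_under * 10 + question_headings * 5)
    ai_overview = (schema_score + structure_score) / 2
    return ownership, ai_overview


page_headings = [
    Heading("What is Position Zero?", "p"),
    Heading("Pricing", "ul"),
    Heading("About us", "p"),
    Heading("Features", "p"),
]
print(snippet_scores(page_headings, {"FAQPage"}))
```

With one question heading and one heading followed by a list, the example page reaches half of the available Snippet Ownership, while the single FAQPage type contributes 33 points to the schema half of AI Overview Appearances.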

Additional adjustments:

- +10 points if more than 5 question sentences are detected or the site type is detected as 'news' or 'blog' (informational pages perform better in snippets per research).

- –10 points if no structured content (lists/tables) follows any heading (penalises unstructured pages, especially e-commerce without HowTo schema).

Why it Matters?

In 2026 the majority of mobile and voice queries result in zero-click experiences where users receive an immediate spoken or summarised answer without visiting any website. Featured snippets, rich results, and AI-generated overviews now capture most of the visibility and traffic for question-based searches across Google, Perplexity, Grok, Gemini, and ChatGPT Search. Pages that do not appear in these formats are effectively invisible to a huge portion of search intent.

Winning Snippet Visibility directly translates to being the voice that users hear from their assistant or the summary they read in an AI overview. This drives brand exposure, authority signals, and indirect referral traffic even when no click occurs. Being selected as the source for Position Zero or cited in generative answers frequently boosts traditional organic rankings because search engines interpret high snippet usage as strong relevance and user satisfaction signals.

Modern engines and AI apps prioritise content that is pre-formatted for instant extraction: question-aligned headings, concise direct answers, lists/tables under structure, and compatible schema types (FAQPage, HowTo, Speakable). Without strong Snippet Visibility your content, no matter how authoritative or well written, will be bypassed in favour of pages that are easier for machines to parse and speak aloud. This gap becomes especially punishing in competitive informational and local queries where voice dominates.

Snippet Visibility is the single biggest lever for capturing voice traffic and AI-synthesised answers in 2026. It determines whether your page becomes the default spoken result or remains hidden behind the curtain of generative summaries.

Ready to see how snippet-ready your content really is?

Check Snippet Visibility on Traffic Torch →

Sentiment Quality

What is it?

Sentiment Quality evaluates the emotional tone, trust signals, positivity, confidence, and human resonance in your content as perceived by AI assistants and voice search engines.

In traditional search up to around 2020, sentiment was rarely a direct ranking factor. Google focused on relevance, authority, and technical signals while basic sentiment analysis was used mostly for spam detection or review aggregation. Early voice assistants (2016–2020) started paying attention to tone indirectly. Content that sounded overly salesy, negative, or robotic was less likely to be selected for spoken answers because it created poor user experience when read aloud.

The major shift occurred with generative AI models from 2022 onward (ChatGPT, Grok, Gemini, Claude, Perplexity). These systems are highly sensitive to emotional valence and trust cues because they aim to deliver helpful, safe, and engaging responses. Negative, hedging, sarcastic, or low-confidence language is frequently filtered or rephrased by AI. Positive, confident, empathetic, and authoritative tone increases the chance of being quoted, recommended, or used as the primary source in voice summaries and answers.

In 2026 Sentiment Quality for voice and AI search includes these core dimensions:

- Positive valence: Overall optimistic, solution-oriented, helpful tone that builds user trust and satisfaction.

- Confidence markers: Clear assertions, minimal hedging ("might", "possibly", excessive disclaimers) that signal expertise and reliability.

- Empathy & relatability: Subtle human elements (acknowledging pain points, using inclusive language) that make content feel conversational and caring.

- Trust & authority signals: Balanced use of social proof, credentials, sources, and unbiased language that reduces perceived bias or manipulation.

- Emotional resonance for voice: Tone that sounds natural and pleasant when synthesised (no aggressive, monotonous, or overly formal delivery).

Sentiment Quality is the hidden emotional filter that determines whether AI assistants feel comfortable citing or recommending your content in spoken form. A page can excel in technical SEO, AI Visibility, and Snippet Visibility, but if the sentiment feels cold, defensive, negative, or untrustworthy it will be deprioritised or rewritten by models in 2026. High Sentiment Quality makes your content not just findable, but likable and shareable by machines.

How it's Tested?

Traffic Torch computes Sentiment Quality client-side using a three-sub-metric model powered by compromise.js (NLP library) and predefined positive/negative word lists. The function requires at least 300 characters of readable text. Shorter content receives a flat low score of 20 with a clear warning to add more factual, neutral content.

The overall score (0–100) is the simple average of three sub-metrics, with conditional boosts and penalties applied afterward. Here is exactly how each part is calculated:

- Sentiment Score: Converts text to lowercase and splits into words. Counts matches against predefined positive words (excellent, great, positive, best, reliable, trustworthy, helpful, accurate, recommended) and negative words (bad, poor, negative, worst, unreliable, inaccurate, confusing, misleading). Computes net positive percentage: (positive count – negative count) / total words × 100. Normalizes to 0–100 with a bias toward neutral/high scores (base 50 + raw value).

- Hallucination Risk: Extracts named entities (people, places, organizations) across the full text using compromise.js. Then checks each sentence for entity presence combined with speculative language ("may", "might", "possible"). Calculates inconsistency percentage (sentences with both entities and speculation / total sentences × 100). Inverts to score: higher consistency = higher score (100 – risk %).

- Mention Sentiment: Detects potential brand mentions (capitalised nouns via compromise.js). For each mention extracts ~50 characters of surrounding context. Counts positive vs negative words in that context. Averages tone across all mentions and scales to 0–100 (neutral default 50 if no mentions found).
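The Sentiment Score and Hallucination Risk calculations above lend themselves to a short sketch. The word lists are the ones quoted in this section. The tokeniser, the sentence splitter, and the "capitalised word after the first token" entity check are simplified assumptions standing in for compromise.js.

```python
import re

POSITIVE = {"excellent", "great", "positive", "best", "reliable",
            "trustworthy", "helpful", "accurate", "recommended"}
NEGATIVE = {"bad", "poor", "negative", "worst", "unreliable",
            "inaccurate", "confusing", "misleading"}
SPECULATIVE = {"may", "might", "possible"}


def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())


def sentiment_score(text):
    """Base 50 plus the net positive percentage, clamped to 0-100."""
    words = tokenize(text)
    if not words:
        return 50.0
    positive = sum(w in POSITIVE for w in words)
    negative = sum(w in NEGATIVE for w in words)
    raw = (positive - negative) / len(words) * 100
    return max(0.0, min(100.0, 50.0 + raw))


def hallucination_risk_score(text):
    """100 minus the percentage of sentences mixing entities with speculation."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    if not sentences:
        return 100.0

    def has_entity(sentence):
        # Assumed proxy: any capitalised word that is not the sentence opener.
        return any(word[:1].isupper() for word in sentence.split()[1:])

    def is_speculative(sentence):
        return any(word in SPECULATIVE for word in tokenize(sentence))

    risky = sum(1 for s in sentences if has_entity(s) and is_speculative(s))
    return 100.0 - risky / len(sentences) * 100


print(sentiment_score("This guide is great"))
print(hallucination_risk_score(
    "We tested Google results. The update might affect Google rankings."
))
```

In the second example only the sentence containing "might" alongside a capitalised name is treated as risky, so one of two sentences counts against the score.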

Additional adjustments:

- +10 points if site type is detected as e-commerce (via keywords like "product", "buy", "price") and sentiment score >70 (positive tone boosts citations in review-heavy verticals).

- –10 points if negative word count exceeds positive count (penalises controversial or overly critical tone unsuitable for voice trust).

Why it Matters?

In 2026 voice search and AI-generated answers are the dominant way users consume information on phones, smart speakers, and wearables. Traditional search results are often bypassed entirely in favour of spoken summaries or direct voice replies from Google Gemini, Perplexity voice mode, Grok, ChatGPT Search, and Apple Intelligence. Content with poor sentiment is frequently skipped, rephrased, or replaced by AI because it fails to inspire trust or sound pleasant when read aloud.

Negative, defensive, overly speculative, or emotionally flat tone dramatically reduces the chance of being selected as the primary source for voice answers or AI overviews. AI assistants are trained to prioritise helpful, confident, positive, and empathetic content, mirroring human preferences for trustworthy speakers. Low Sentiment Quality increases hallucination risk (AI inventing facts to compensate) and lowers citation likelihood, even if the page ranks well in classic SERPs.

Modern engines and generative models treat sentiment as a core trust and engagement signal. Positive, authoritative, relatable tone correlates strongly with being quoted, recommended, or used as the basis for voice summaries. Negative or uncertain language triggers safety filters or deprioritisation, especially in health, finance, local, and advice-related queries where trust is paramount. High Sentiment Quality makes your content not only discoverable but also preferable and shareable by machines.

Sentiment Quality is the emotional gatekeeper in the voice-first and AI-synthesised web of 2026. It determines whether your page is embraced as a credible, likable voice or quietly ignored in favour of warmer, more confident alternatives.

Ready to check how trustworthy and voice-friendly your tone really is?

Audit Sentiment Quality on Traffic Torch →

Traditional Keywords

What is it?

Traditional Keywords evaluates how effectively your page targets and places exact-match, semantic, and long-tail keywords that users still type or speak into search engines and voice assistants in 2026.

In the classic Google era (2000–2015), keyword optimisation was the dominant ranking factor. Exact-match keywords in titles, headings, meta descriptions, URL slugs, alt text, and body content drove most visibility. Density, proximity, and placement were heavily weighted. Voice search (2016–2020) began shifting the focus toward natural-language questions and conversational phrases, but traditional keyword signals remained foundational, especially for local, product, and branded queries.

Even with the rise of generative AI and semantic understanding from 2020 onward, traditional keyword placement has not disappeared. Google, Gemini, Perplexity, Grok, ChatGPT Search, and other assistants still rely on clear keyword anchors to match user intent, disambiguate queries, and rank pages in retrieval-augmented generation pipelines. In 2026 many voice queries remain hybrid: users speak full questions but the underlying engine still matches against indexed keyword signals, entity-topic clusters, and exact phrases.

Today Traditional Keywords for voice and AI search includes these core dimensions:

- Exact-match & partial-match placement: Strategic use of primary keywords in title, H1, early paragraphs, and meta tags without stuffing.

- Semantic & LSI coverage: Related terms, synonyms, and topic clusters that reinforce main keywords and improve entity understanding.

- Long-tail & question keywords: Natural incorporation of full spoken queries ("best wireless earbuds under $100 2026") in headings and body text.

- Keyword density & distribution: Balanced presence across content sections without unnatural repetition or keyword cannibalisation.

- Voice-query alignment: Ensuring keywords match real spoken phrasing rather than just typed short-tail versions.

Traditional Keywords remain the anchor layer that ties everything together. Even the most advanced AI assistants need clear keyword signals to retrieve, rank, and cite pages accurately. A page can have perfect sentiment, structure, and AI visibility, but without strong traditional keyword optimisation it will struggle to match user intent and appear in relevant voice or AI results in 2026.

How it's Tested?

Traffic Torch computes Traditional Keywords client-side using a three-sub-metric model powered by compromise.js (NLP library) and simple text heuristics. The function requires at least 300 characters of readable text. Shorter content receives a flat low score of 20 with a clear warning to add more conversational phrases.

The overall score (0–100) is the simple average of three sub-metrics, with conditional boosts and penalties applied afterward. Here is exactly how each part is calculated:

- Conversational Rankings Sim: Uses compromise.js to extract all question sentences from the text. Calculates the percentage of sentences that are questions. Scales aggressively (×10) so >10 % question-based content reaches near-full points. This simulates how well the page aligns with natural voice query phrasing.

- Long-Tail Density: Extracts clauses/phrases via compromise.js. Counts how many have 4+ words (approximating long-tail expressions). Calculates raw percentage and scales (×20) so >5 % long-tail density reaches near-full points. This rewards natural, specific phrasing over short keywords.

- Query Volume/Difficulty: Calculates average phrase/clause length across the text. Combines with an estimated commonality score (percentage of very common words like "the", "a", "and", etc.). Longer phrases + lower commonality (more unique terms) = higher score, simulating higher volume potential and lower difficulty for specific voice queries.
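The first two sub-metrics above can be approximated with the sketch below. It uses regular expressions instead of compromise.js, treats any sentence ending in a question mark as a question, and splits clauses on punctuation. The guide does not say what the long-tail "raw percentage" is measured against, so dividing by the total word count is an assumption made here.

```python
import re


def split_sentences(text):
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def conversational_score(text):
    """Percentage of sentences that are questions, scaled x10 and capped at 100."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    questions = sum(1 for s in sentences if s.endswith("?"))
    return min(100.0, questions * 100 / len(sentences) * 10)


def long_tail_density(text):
    """Clauses with 4+ words as a percentage of total words (assumed), scaled x20."""
    words = text.split()
    if not words:
        return 0.0
    clauses = [c for c in re.split(r"[,;:.!?]+", text) if c.strip()]
    long_clauses = sum(1 for c in clauses if len(c.split()) >= 4)
    return min(100.0, long_clauses * 100 / len(words) * 20)


# 20 sentences, one of which is a question: 5 % questions, scaled x10.
sample = " ".join(["Plain statement here."] * 19 + ["Is this a question?"])
print(conversational_score(sample))

# One 4-word clause among 40 words: 2.5 % long-tail density, scaled x20.
sparse = "one two three four, " + ", ".join(["x"] * 36)
print(long_tail_density(sparse))
```

Both functions follow the same pattern as the other modules: compute a raw percentage, multiply by the stated factor, and cap the result at 100.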

Additional adjustments:

- +10 points if more than 10 question sentences are detected or site type is 'blog' or 'news' (informational/question-heavy content performs better in voice rankings per research).

- –10 points if average phrase length falls below 4 words (penalises short, list-heavy content like basic e-commerce pages that miss voice intent).

Why it Matters?

In 2026 voice search dominates mobile queries and many informational lookups on smart devices. Traditional keyword signals have not been replaced. They remain the foundational layer that search engines and AI assistants use to match user intent, retrieve candidates, and rank pages before any semantic or generative processing occurs. Without strong keyword alignment your content will not even enter the consideration set for most queries.

Even advanced models like Google Gemini, Perplexity, Grok, ChatGPT Search, and Apple Intelligence rely on exact-match and long-tail keyword anchors to disambiguate ambiguous voice inputs, prioritise relevance, and decide which pages to pull into retrieval-augmented generation. Pages lacking clear keyword placement (in titles, headings, early content, and natural phrasing) are frequently outranked or ignored in favour of competitors that match the spoken query more directly.

Traditional Keywords bridge the gap between typed short-tail searches and spoken conversational queries. They ensure your page is indexed correctly, appears in autocomplete suggestions, powers local pack results, and serves as the initial hook for AI summarisation. Weak keyword optimisation creates a visibility ceiling. No matter how high your AI Visibility, Content Quality, Snippet Visibility, or Sentiment Quality scores are, poor keyword signals will limit reach across every engine and assistant.

Traditional Keywords are still the entry ticket to relevance in 2026. They anchor intent matching, retrieval, and ranking in both classic SERPs and the voice/AI-first world that now defines most user journeys.

Ready to see how well your page still matches real voice and typed queries?

Check Traditional Keywords on Traffic Torch →

Conclusion & Next Steps – Master Voice Search in 2026

The five modules covered in this guide (AI Visibility, Content Quality, Snippet Visibility, Sentiment Quality, and Traditional Keywords) form the complete 360° framework that Traffic Torch uses to evaluate and optimise any page for voice search and AI assistants in 2026.

Voice is no longer a side channel. It is the primary way billions of queries are expressed every day on mobile devices, smart speakers, wearables, and in-car systems. Google Gemini Live, Perplexity voice mode, Grok responses, ChatGPT Search voice, and Apple Intelligence are reshaping how users discover, trust, and act on information. Pages that score poorly across these five pillars are effectively invisible in this new reality.

The good news is that the fixes are actionable and often low-effort once diagnosed. Add Speakable and FAQPage schema to boost parseability. Rewrite key paragraphs for natural 40–60 word answers. Inject confident, positive tone with first-person pronouns. Place long-tail questions under clear headings. Strengthen entity salience and citation signals. Each small improvement compounds, and Traffic Torch shows you exactly where to start based on real-time client-side analysis.

The future of organic visibility belongs to sites that are not just optimised for algorithms, but built to be spoken, summarised, trusted, and recommended by AI assistants. The tools and insights in this guide give you the roadmap. Now it is time to put them into action.

Ready to run your full Voice Search audit?

Get your instant 360° health score across all five modules + competitive gap analysis + AI-generated fixes. No login. No tracking. 100 % client-side.

Start Free AI Voice Search Audit Now →

Updated March 2026 – based on ongoing correlation research, voice query trends, and real-time testing across major AI assistants.